Maximum Likelihood Estimation of Network Size by N Parallel k-lookups in Kademlia DHT

1. Introduction

The lookup (often informally referred to as "k-lookup") is the primary and most crucial function in the Kademlia peer-to-peer distributed hash table (DHT) protocol [1]. It is a recursive, iterative algorithm designed to locate the closest nodes to a specific target ID within the network. The final set of closest nodes found has XOR distances that are minimised within the network, and the magnitude of these distances is related to the density of nodes, which itself depends on network size . It's clear that performing several lookups to random target nodes, we get more information about anticipated network size. A method of estimation by processing parallel k-lookups is proposed in [2]. It's based on the fact that distances in each ordered set of k-lookup are the order statistics with specific beta distributions which depend on . Then, according to the method of moments (MoM) [3] the estimator for is obtained by matching the expected value of the beta distribution with the empirical result by averaging distances from lookup data. The suggested estimator is great from a practical point; however, some aspects of the provided solution are heuristic, e.g., the ways of averaging sample data. It's also hard to make assumptions on the efficiency of the MoM estimator, because there is no way of getting theoretical minimum variance for any unbiased estimator.

Using the same model as in [2], the current work considers Maximum Likelihood Estimation (MLE) [4]. The MLE is generally preferred over MoM because it produces more efficient, consistent, and often unbiased estimators with stronger theoretical properties. MLE requires no heuristic assumption and can be derived directly from the joint Probability Distribution Function (PDF) of distances from N parallel k-lookups. The joint PDF also allows us to get the Cramér-Rao bound [5] for minimal variance of the efficient estimator.

The main results of the work are:

- the MLE estimator (3), which uses the average of logarithms of normalised distances from random -lookups;

- the Cramér–Rao bound (4) for minimal variance of the efficient estimator and its approximations (6-7);

- illustration of the efficiency of the MLE estimator by results of computer simulation.

2. The model

Kademlia distance estimation works by querying "closest" nodes to a random target ID.

Let be XOR distances from a probe node to all network nodes, and are normalised distances for -bit addresses. are modelled as independent random variables uniformly distributed on .

The result of a single lookup is the ordered set of smallest distances selected from . Here is the distance to the -th closest node. So, we observe the first order statistics [6] from a sample of normalised distances .

When performing several k-lookups to N random probes, we make an assumption that all N samples of distances (so, the observed order statistics) are independent.

3. The likelihood function

For a single lookup the likelihood function (LF) is the joint PDF of the first order statistics from a sample of size :

We derive this later, but notice an important fact now: the optimal ML estimate of can be obtained by taking into account only -th order statistics. The statistics up to are irrelevant to the estimate. In other words, to estimate the network size, only the last k-th distance in ordered lookup results is sufficient.

This conclusion can be generalised for the optimal Bayesian estimator, because the LF as a part of the posterior distribution is the only place which captures the observation.

Note that the heuristic solutions proposed in [2] take into account all distances from the lookup result.

Using simplified notation, true in the area where LF differs from zero

and taking into account the assumption on independence of the samples, we get the LF for several k-lookups to N random nodes:

where the observation is the -th smallest normalised distance taken from the -th lookup.

4. Maximum likelihood estimate

The MLE estimator maximises (2). It means that is an integer at which the likelihood sequence stops increasing and starts decreasing. To find it, consider the ratio function and the conditions the conforms to:

- The likelihood is increasing up to : .

- The likelihood starts decreasing after : .

As follows from (2), the ratio function is:

and within rounding error the MLE is the root of equation :

From a practical point of view, the last expression is easier to implement in the equivalent logarithmic form:

Algorithmically, the estimator (3) performs the following steps:

- For each lookup }, which consists of smallest distances to random targets:

- select the maximal distance ;

- calculate logarithmic metric for the normalised distance .

- Calculate average on the metrics.

- Estimate the network size.

As expected, the estimator (3) always produces a result greater than , because is negative. The two edge cases when are nearing to () or to () are also intuitively understandable.

5. Efficiency of the estimate

MLEs are known for their strong theoretical properties. For large sample sizes, they are consistent (converge to the true parameter), asymptotically normal, and asymptotically efficient (achieve the lowest possible variance).

Treating as a continuous parameter, the variance of the efficient estimator [5] is given by Cramér–Rao Lower Bound (CRLB):

where the Fisher Information is obtained by averaging of the second derivative of the log-likelihood (2):

The log-likelihood for N observations is:

where is the gamma function. Differentiating this two times, obtain:

where is the trigamma function [7].

So, the result for minimal variance is:

Next, the difference of trigamma functions in (4) we approximate with:

The approximation (5) is quite good, especially when is large and is small relative to . Indeed, we get (5) using a known series representation [7] and substituting the sum by an integral:

Finally, in practice it is often convenient to normalise estimation error to the estimated value. Substituting (5) into (4) and dividing the result by , we obtain an expression for normalised variance, which defines the potential precision of the MLE estimator:

It is worth noting that in practice when network size is much larger than the normalised variance of efficient MLE is mainly determined by the values of and , rather than . For example, having and , the standard deviation increases only from 10% to 11%, as network size grows from 50 to nodes. Without the dependence on in (6), the standard deviation of the MLE estimator can be calculated as

6. Results of statistical simulation

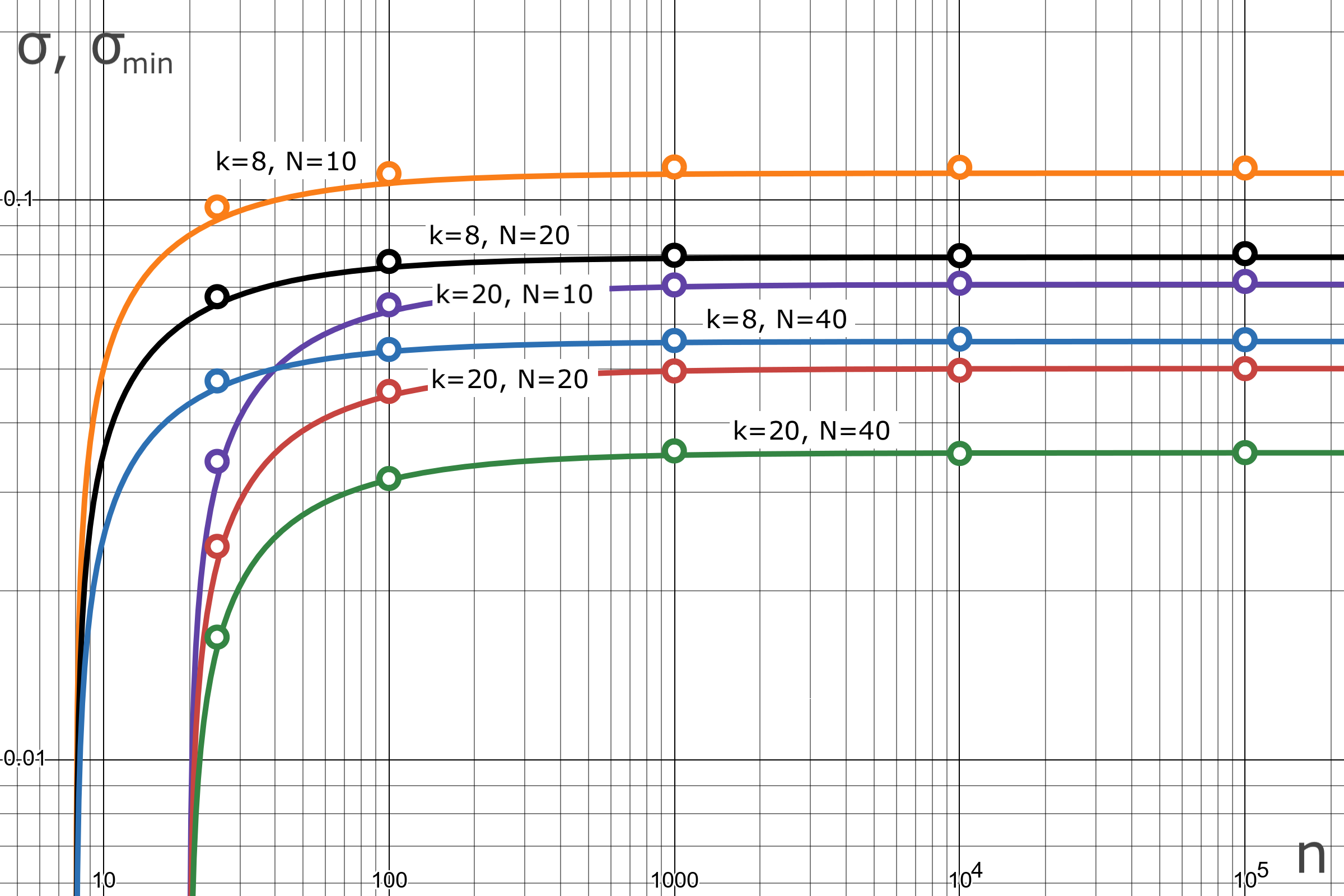

The simulation [8] was performed to compare the precision of the MLE estimator (3) with the theoretical lower bound (6). We process 10,000 estimations for each combination of parameters and network sizes . Each estimation receives statistics of -th order from independent random variables uniformly distributed on . The normalised errors are then used to calculate the sample variance which is the subject of comparison with the theoretical lower bound (6).

Table 1: Simulation results for standard deviation of MLE.

Figure 1: Standard deviation of MLE estimate in comparison with the theoretical lower bound.

Figure 1: Standard deviation of MLE estimate in comparison with the theoretical lower bound.

The solid curves in Figure 1 show the theoretical lower bounds for standard deviation . They are plotted according to (6) as a function of network size. The simulation results, related to the sample deviation , are shown as points for fixed network sizes. The results prove the efficiency of the MLE estimate. For the worst case (), the MLE deviation (10%) differs by less than 1% from the lower bound. At increasing and , the estimate precision and lower bound are practically indistinguishable. For combination () the MLE deviation (1.7%) differs by less than 0.01% from the lower bound.

7. Conclusion

The optimal MLE estimator differs from the estimator obtained in [2] by two significant aspects:

- Only the maximal distances (i.e., statistics of order ) are used from results of -lookups (sets of nodes closest to random targets). The statistics up to are irrelevant to the estimate. We can observe the minimax principle here.

- The logarithms of normalised distances ) (not the distances directly) are used in the calculation of the sample mean to get the grouped observation.

In practice, when network size is larger than , the normalised variance of efficient MLE is mainly determined by the values and (inversely proportional to), rather than . The maximum of the CRLB is reached at big , i.e., the minimax principle is observed again.

The results of statistical simulation prove the efficiency of the MLE estimate. For , and , the variance of the MLE and the theoretical lower bound are practically indistinguishable.

8. Appendix. Joint PDF of the first k order statistics from a sample of size n

We derive formula (1) for the joint PDF of the first order statistics, , from a random sample of size drawn from a standard uniform distribution on the interval .

We aim to find the probability for infinitesimal intervals. This event occurs if one sample falls into each of the intervals , and the remaining samples are greater than .

The samples must be distributed into categories: specific small intervals and one large interval . The number of distinct ways to arrange the samples into these categories is given by the multinomial coefficient:

Let , for is the standard uniform PDF. Then the probability of a single observation falling into an interval is . The probability of an observation falling into the interval is . So, the probability for one specific arrangement is the product of these probabilities:

The joint probability is the total number of arrangements multiplied by the probability of a single arrangement. The joint PDF is the coefficient of the volume element :

9. References

2. Eli Sohl. A New Method for Estimating P2P Network Size. 🔗

3. Wikipedia. Method of moments (statistics). 🔗

4. Wikipedia. Maximum likelihood estimation. 🔗

5. Wikipedia. Cramér–Rao bound. 🔗

6. Wikipedia. Order statistic. 🔗

7. Wikipedia. Trigamma function. 🔗

8. Iurii Kyrylenko. Statistical simulation of the MLE estimator for Kademlia network size. 🔗